Anthropic launched Claude Managed Agents in early April 2026. I took a look, built a quick POC, and have some thoughts to share.

Building a production-ready AI agent normally means building a lot of plumbing before you get to the interesting part:

secure sandboxing

session state

authentication

error recovery

logging

monitoring

All of that has to be built and hosted somewhere, and maintaining it adds ongoing overhead.

Claude Managed Agents aims to take that plumbing off our plate. Anthropic hosts it. You define what the agent does (tasks, tools, guardrails), and the platform aims to handle everything underneath. Sessions can run for hours, state persists through disconnections, and every interaction, tool call, and decision is logged in the Claude Console without you having to build that visibility yourself.

Multi-agent coordination (agents spinning up and directing other agents) is also in there, currently in research preview.

Pricing sits on top of standard Claude API token rates, plus $0.08 per session-hour of active runtime. That's a meaningful number to factor in for high-frequency use cases, but for lower-volume internal tooling, it's negligible.
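To put a rough number on the runtime charge, here is a minimal sketch of the arithmetic. It assumes the charge is simply active session-hours multiplied by $0.08, with no minimum billing increment, and it ignores token costs entirely. The workloads in the example are hypothetical and only there to show the spread.

```python
SESSION_HOUR_RATE_USD = 0.08  # runtime rate quoted above; token usage is billed separately


def monthly_runtime_cost(sessions_per_day: float,
                         avg_active_hours: float,
                         days: int = 30) -> float:
    """Estimated monthly runtime charge in USD, excluding token usage.

    Assumes a plain product of active session-hours and the hourly rate.
    """
    return sessions_per_day * avg_active_hours * days * SESSION_HOUR_RATE_USD


if __name__ == "__main__":
    # Hypothetical workloads, not measurements from our POC.
    internal = monthly_runtime_cost(sessions_per_day=20, avg_active_hours=0.25)
    heavy = monthly_runtime_cost(sessions_per_day=5000, avg_active_hours=2)
    print(f"internal tool: ${internal:,.2f}/month")
    print(f"high-volume:   ${heavy:,.2f}/month")
```

Under those assumptions the internal tool comes to about $12 a month in runtime, while the high-volume case is around $24,000, which is why the number deserves a look before you scale up.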

What it opens up

The bottleneck for most teams trying to deploy agents isn't the AI capability; it's the infrastructure cost. By the time you've built sandboxing, state management, and a sensible way to inspect what's actually happening, you can have spent days or weeks before the agent does anything useful.

Managed Agents aims to compress that timeline. The practical implication is that the gap between “we have an agent idea” and “colleagues can actually use it” gets much shorter. It also means less technical people can configure agents via the Claude Console using the guided edit feature, rather than depending on a developer for every tweak and change.

The API is already full-featured, though I kept running into compatibility issues because various features were on different beta releases. I was constantly having to check and update “anthropic-beta” headers and add parameters to requests.

    curl --request GET \
      --url 'https://api.anthropic.com/v1/agents/agent_011CZvKRHVxcE3p7bRTq63h8/versions?beta=true' \
      --header 'anthropic-beta: managed-agents-2026-04-01' \
      --header 'anthropic-version: 2023-06-01' \
      --header 'content-type: application/json' \
      --header 'x-api-key: sk-ant-api03-CCxxxxxxxxxxxxxxxxxxxxxxxx'
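One way to tame the header juggling is to keep the beta values in a single place and build every request from it. The sketch below uses only the Python standard library and reuses the endpoint and header values from the curl command above. The helper names (`build_headers`, `list_agent_versions_request`) are my own for illustration, and the only beta value listed is the one that appears in this post; add others as your features require.

```python
import urllib.request
from typing import Dict, Iterable

API_BASE = "https://api.anthropic.com/v1"
ANTHROPIC_VERSION = "2023-06-01"

# Single place to update when a feature moves to a different beta release.
BETA_FLAGS = ("managed-agents-2026-04-01",)


def build_headers(api_key: str, betas: Iterable[str] = BETA_FLAGS) -> Dict[str, str]:
    """Return the headers used for every call; multiple betas are comma-separated."""
    return {
        "x-api-key": api_key,
        "anthropic-version": ANTHROPIC_VERSION,
        "anthropic-beta": ",".join(betas),
        "content-type": "application/json",
    }


def list_agent_versions_request(agent_id: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) the GET request shown in the curl example."""
    url = f"{API_BASE}/agents/{agent_id}/versions?beta=true"
    return urllib.request.Request(url, headers=build_headers(api_key), method="GET")
```

To send it, pass the returned object to `urllib.request.urlopen`, reading the key from an environment variable rather than hard-coding it.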

What is exposed in the Console itself is very useful. Being able to inspect every session, every tool call, and every failure mode without having to build custom logging is really handy, especially if you're deploying agents on behalf of clients and need to demonstrate what happened. There is also an evaluation tool in the console, which I have yet to try out.

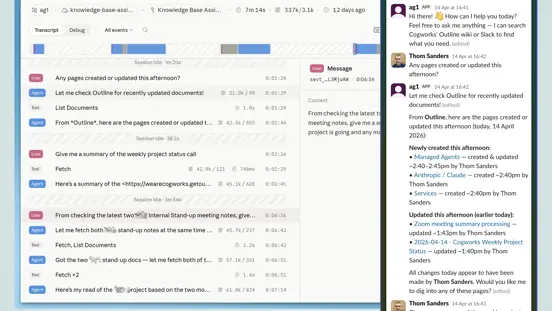

The Claude Console displaying a session transcript for a Managed Agents instance named 'slack-thom-1776181278.674409'.

The transcript shows a conversation in which a user asks questions via Slack, including requests for recently updated Outline pages and a project status summary, and the agent responds by invoking the Fetch and List Documents tools on Outline. Each step shows token usage, response time, and timestamps. A detail panel on the right shows the full content of a selected user message.

What we built

We wanted to test the fastest path from idea to usable agent, so we kept the implementation deliberately thin.

We built a small bridge app on our internal server. Its only job is message routing. It receives messages from Slack, passes them to the Managed Agents API, and returns the response as a stream. That's it. Everything else (session management, tool execution, state) is handled by the Claude platform.
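To give a feel for how thin that routing layer is, here is a minimal sketch of the idea, not our actual bridge. The `AgentBackend` interface is hypothetical: a real implementation would wrap the Managed Agents API calls, which I have deliberately left out. The `slack-<user>-<thread timestamp>` naming is an assumption as well, and `EchoBackend` is a stand-in so the sketch runs without network access.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, Iterator, Protocol


class AgentBackend(Protocol):
    """Hypothetical interface; a real one would wrap the Managed Agents API."""

    def create_session(self, name: str) -> str: ...
    def send(self, session_id: str, text: str) -> Iterable[str]: ...


@dataclass
class SlackBridge:
    backend: AgentBackend
    # Slack thread name -> session id returned by the backend
    sessions: Dict[str, str] = field(default_factory=dict)

    def handle_message(self, user: str, thread_ts: str, text: str) -> Iterator[str]:
        """Route one Slack message to the agent and relay the reply chunks."""
        name = f"slack-{user}-{thread_ts}"  # assumed naming scheme
        if name not in self.sessions:
            # First message in this thread: ask the platform for a session.
            self.sessions[name] = self.backend.create_session(name)
        yield from self.backend.send(self.sessions[name], text)


class EchoBackend:
    """Stand-in backend so the sketch runs offline."""

    def __init__(self) -> None:
        self.created = []

    def create_session(self, name: str) -> str:
        self.created.append(name)
        return f"session-{len(self.created)}"

    def send(self, session_id: str, text: str) -> Iterator[str]:
        yield f"[{session_id}] "
        yield f"you said: {text}"


if __name__ == "__main__":
    bridge = SlackBridge(EchoBackend())
    for chunk in bridge.handle_message("thom", "1776181278.674409", "status?"):
        print(chunk, end="")
    print()
```

The bridge holds nothing but a thread-to-session lookup. Conversation history, tool execution, and state all stay on the platform side, which is what keeps the app this small.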

The current setup has two tools connected via MCP: Slack and Outline (our internal knowledge base). The agent can answer colleagues' questions by searching Slack history and retrieving content from Outline. It lives in a channel or DM thread: colleagues talk to it, and it answers.

It's early days, and we're not putting Managed Agents in front of clients yet, but that wasn't the point. I wanted to see how quickly we could get something working, and the answer was: much faster than building it from scratch.

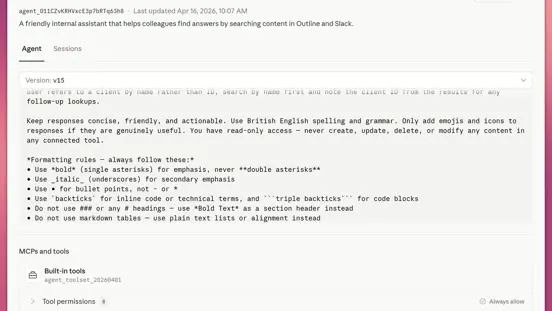

The Claude Console displaying the configuration view for an agent named 'ag1', currently on version 15 and marked Active.

The visible portion of the system prompt defines the agent's behaviour: read-only access, British English, concise responses, and specific Slack message formatting rules. Below the prompt, the MCPs and tools section shows Built-in tools and an Outline MCP connection, with 8 tool permissions set to always allow.

Built-in memory

Worth flagging because it has the potential to change the picture quite a bit: Anthropic announced built-in memory for Managed Agents on 23rd April. It has just gone into public beta, and I have just started adding it into the mix.

The short version: agents can now retain and build on what they've learned across sessions. Memory is stored as files on a filesystem, which means agents interact with it the same way they'd interact with any other tool: reading, writing, organising. When creating a session, you add memory stores as resources, tell the agent where to mount them, and provide information on how to use them.

For enterprise use, the interesting detail is in the access controls. You can have a company-wide memory store that agents can read but not write to, alongside per-user or team stores with full read/write access. Multiple agents can work against the same store concurrently. Everything is audited—you can see which agent wrote which memory, roll back to earlier versions, and inspect updates in the Console.

In practice, this means agents that get better at a specific task over time without you having to manually update their prompts or rebuild retrieval pipelines. I have found that having the agent build a cache of some tool responses can really reduce the number of tool calls required. No need to constantly look up information about team members as it changes infrequently, for example.
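In the managed setup the agent does this caching itself, by reading and writing files in its memory mount as guided by the prompt, so no code is involved. Written out as plain Python purely for illustration, the pattern it follows is roughly the one below. The function name, file layout, and one-week expiry are all assumptions of mine, not anything the platform prescribes.

```python
import json
import time
from pathlib import Path
from typing import Callable

ONE_WEEK_S = 7 * 24 * 3600


def cached_lookup(cache_file: Path,
                  key: str,
                  fetch: Callable[[str], dict],
                  max_age_s: float = ONE_WEEK_S,
                  now: Callable[[], float] = time.time) -> dict:
    """Return a cached value if it is fresh enough, otherwise fetch and store it."""
    cache = json.loads(cache_file.read_text()) if cache_file.exists() else {}
    entry = cache.get(key)
    if entry is not None and now() - entry["saved_at"] < max_age_s:
        return entry["value"]  # fresh: no tool call needed

    value = fetch(key)  # stale or missing: make the (expensive) tool call
    cache[key] = {"saved_at": now(), "value": value}
    cache_file.write_text(json.dumps(cache, indent=2))
    return value
```

Slow-changing data such as team member details suits a long expiry. Anything that changes daily would need a much shorter one, or no caching at all.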

I'll write a follow-up once I’ve had a full play around with the possibilities.

Our take on enterprise readiness

What's good:

The governance and tracing features are useful: full execution logging, scoped permissions, and identity management. If you need to be able to say “here is exactly what our agent did and why”, the Console gives you that.

Sandboxing and authentication are handled properly, and this feels like something built to run real workloads, not a demo that happens to have an API.

Tools to assist with prompt engineering and evals also give your teams a clear path to easily create and iterate on their agents.

What to think carefully about:

Multi-agent coordination is still in research preview, and built-in memory has just been released in beta. It is early days for this product, and we can expect fairly rapid development at this point, so you may find the sands shifting under your feet to some degree (though in this arena, this is hardly a novel issue!).

The $0.08/session-hour adds up if your agents are running long sessions at scale. Do the maths for your specific use case before committing.

Vendor dependency is something that must always be carefully considered. You are handing infrastructure ownership to Anthropic. For most teams, that's a reasonable trade — but it's worth mentioning.

Overall: Ready for internal tooling and experimentation now. Client-facing production deployments are credible if your use case is well-defined and your governance requirements fit what the platform provides. Complex or sensitive deployments deserve careful evaluation, as they would on any managed infrastructure.

What we'd advise and what we're doing

If you're an organisation that has been wanting to put agents in front of your teams but keeps hitting the infrastructure problem, this is worth a serious look. In our experience, the setup overhead is much lower than building the plumbing yourself.

Start with something internal and low-stakes: a Slack-accessible agent that can answer questions from your knowledge base is a good first candidate. It gets the team comfortable with agents as a working pattern before you're deploying them on anything critical.

We're continuing to develop our own setup, exploring what it takes to move from internal experimentation to a more structured approach. We're also thinking about where this fits alongside our existing n8n automation work and Maestro, our internal agentic workflow runner. They solve overlapping but distinct problems, and the right architecture uses both deliberately.

If you're evaluating AI agents for your organisation and want a straight conversation about what's actually deployable and what isn't, let's talk.
